08 September 2020

This is the second of a 5-part series on AWS exploits and similar findings discovered over the course of 2020. All findings discussed in this series have been disclosed to the AWS security team and had patches rolled out to all affected regions, where necessary. A big thanks to my friend and fellow Australian Aidan Steele for co-authoring this series with me. This post was written by Aidan.

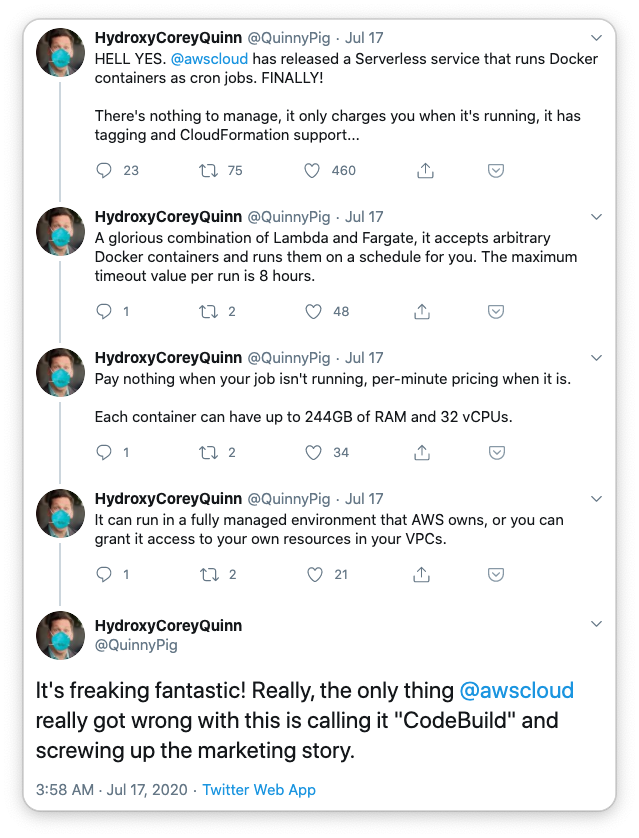

According to the official product page, CodeBuild is a fully managed continuous integration service that compiles source code, runs tests, and produces software packages that are ready to deploy. Unofficially, if you ask @QuinnyPig, it’s just a great serverless way to run arbitrary Docker images and scripts.

CodeBuild has a lot of configuration options to cater for just about every use case. One of those options is “privileged mode”, equivalent to the --privileged flag when executing docker run. It’s often said that a privileged container is essentially as good as no container at all, security-wise. Which brings us to an interesting question: can you break out of the CodeBuild container? Where will you end up?
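As a rough illustration of what "privileged" means in practice: the `mount` system call needs the `CAP_SYS_ADMIN` capability, which is bit 21 of the capability masks Linux reports in `/proc/self/status`. The sketch below checks that bit in a hex mask. The function name `has_cap_sys_admin` is made up for this example, and having the capability is necessary but not sufficient for mounting a host device (device access rules also apply), so treat it as a hint rather than a definitive test.

```shell
# Return success if the given hex capability mask has CAP_SYS_ADMIN (bit 21) set.
# The mask is passed without a 0x prefix, as it appears in /proc/self/status.
has_cap_sys_admin() {
  [ $(( (0x$1 >> 21) & 1 )) -eq 1 ]
}

# Usage on Linux: check the effective capabilities of the current process.
# has_cap_sys_admin "$(awk '/^CapEff:/ {print $2}' /proc/self/status)" \
#   && echo "CAP_SYS_ADMIN present"
```

For comparison, a container started with Docker's default capability set typically reports `00000000a80425fb`, which does not include bit 21.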

So how should we break out? Let’s start by looking at some of the things that privileged containers can do that regular containers can’t. One such action is the ability to mount devices. What devices are already mounted? This is (an excerpt of) the output from running mount:

proc on /proc type proc (rw,nosuid,nodev,noexec,relatime)

...

cgroup on /sys/fs/cgroup/blkio type cgroup (rw,nosuid,nodev,noexec,relatime,blkio)

...

/dev/nvme0n1p1 on /codebuild/output type ext4 (rw,noatime,data=ordered)

/dev/nvme0n1p1 on /codebuild/readonly type ext4 (rw,noatime,data=ordered)

/dev/nvme0n1p1 on /codebuild/bootstrap type ext4 (ro,noatime,data=ordered)

...
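The full `mount` output is noisy, so here is a small sketch for picking out only the entries backed by a block device. It assumes the usual `<source> on <mountpoint> type <fstype> (<options>)` line shape shown above and will misparse mount points that contain spaces. The helper name `device_mounts` is hypothetical.

```shell
# Print "<device> <mountpoint> <fstype>" for every mount whose source is under /dev/.
# Reads `mount`-style lines on stdin.
device_mounts() {
  awk '$1 ~ /^\/dev\// && $2 == "on" && $4 == "type" { print $1, $3, $5 }'
}

# Usage inside the container:
# mount | device_mounts
```

Run against the excerpt above, this would keep the three `/dev/nvme0n1p1` lines and drop the `proc` and `cgroup` entries.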

Those are interesting! If we poke around in those /codebuild directories we can see what looks like the files needed by the CodeBuild agent itself running in the container. But they must have been mounted from the host. What other files are on the host? We can check by running mkdir /host && mount /dev/nvme0n1p1 /host. Now let’s take a look (again cutting the boring stuff):

# ls -l /host

total 156

dr-xr-xr-x 2 root root 4096 Aug 13 21:07 bin

drwxr-xr-x 11 root root 4096 Aug 13 21:04 cgroup

drwxr-xr-x 2 root root 4096 Sep 2 01:45 codebuild-local-cache

-rwxr-xr-x 1 root root 31083 Sep 2 01:44 install

dr-xr-xr-x 7 root root 4096 Apr 27 20:53 lib

drwxr-xr-x 2 root root 4096 Apr 27 20:53 proc

dr-xr-x--- 7 root root 4096 Sep 2 02:15 root

dr-xr-xr-x 2 root root 12288 Apr 27 20:53 sbin

drwxrwxrwt 5 root root 4096 Sep 2 02:29 tmp

drwxr-xr-x 13 root root 4096 Apr 27 20:53 usr

drwxr-xr-x 18 root root 4096 Apr 27 20:53 var

# ls -l /host/tmp

total 1632

-rw-r--r-- 1 root root 2054 Sep 2 01:45 cts.normal.json

-rw-r--r-- 1 root root 5 Sep 2 01:45 cts_socat.pid

-rwxr-xr-x 1 root root 370 Sep 2 01:45 ensureInstance.sh

-rw-r--r-- 1 root root 5 Sep 2 01:45 fe_socat.pid

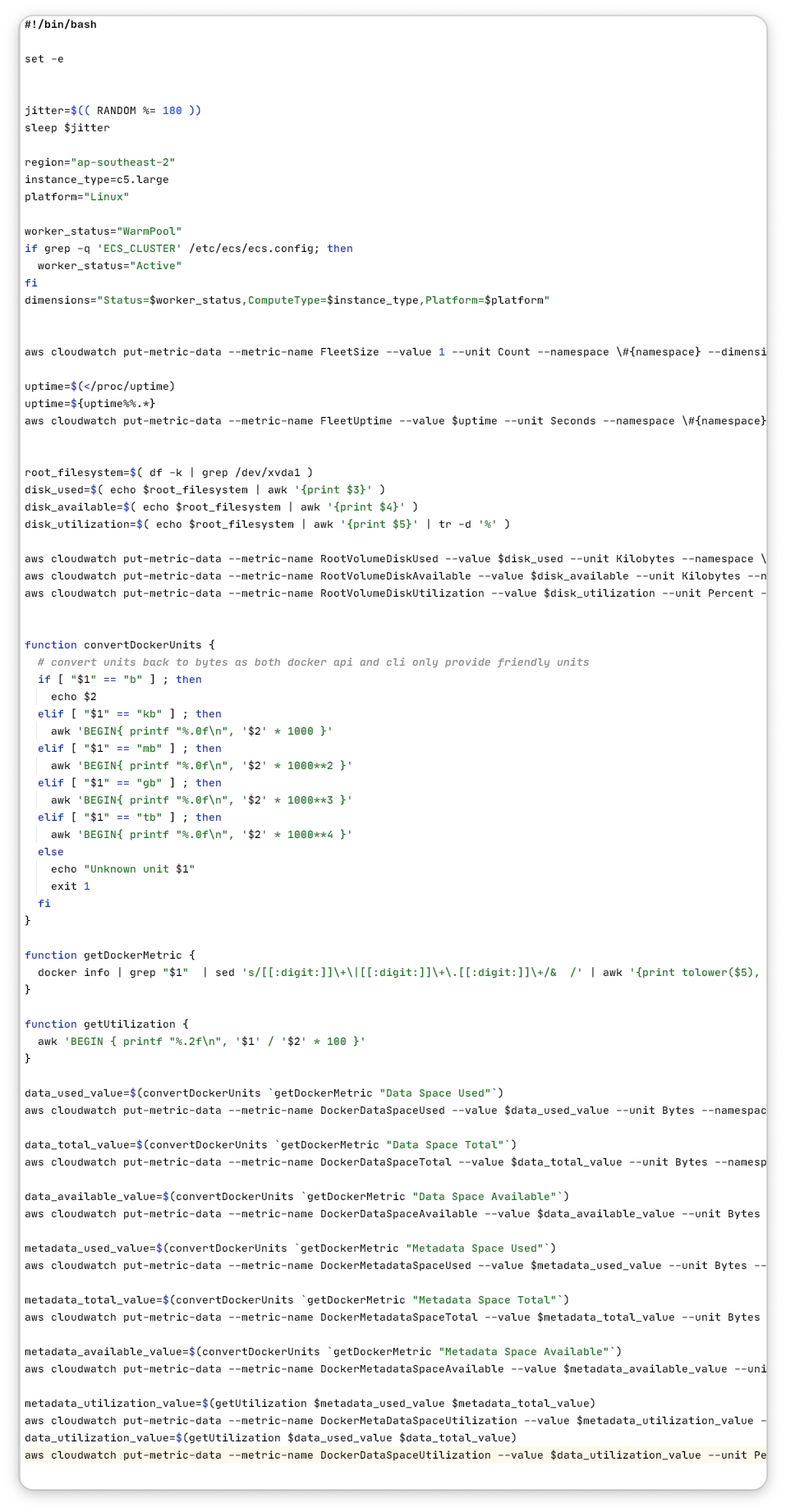

-rwxr-xr-x 1 root root 4318 Sep 2 02:16 monitor.sh

-rw-r--r-- 1 root root 1634011 Sep 21 2017 parallel-20170922.tar.bz2

-rwxr-xr-x 1 root root 3202 Sep 2 01:45 provision.sh

-rwxr-xr-x 1 root root 682 Sep 2 01:45 socat_script.sh

drwx------ 2 root root 4096 Sep 2 01:44 tmp.MOeHFIUJkF

The script in /host/tmp/monitor.sh isn’t super interesting in itself - it’s just putting some metrics in CloudWatch for use by the CodeBuild team. But it does look like it terminates, rather than running forever. So there must be something running it on a schedule. Maybe a crontab?

So we go poking around and sure enough, /host/etc/cron.d/buildfleetmetrics runs that script every 5 minutes: */5 * * * * root /tmp/monitor.sh. Do you know what that means?

That means we can edit /host/tmp/monitor.sh, wait up to 5 minutes and tada: we’re now running our own code on the underlying EC2 instance - I knew serverless had servers!
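The "up to 5 minutes" figure follows directly from the `*/5` in the minute field: cron fires the job at every minute divisible by 5. As a minimal sketch, the wait until the next tick can be estimated from a Unix timestamp. The function name `seconds_until_next_run` is made up here, and the arithmetic assumes the host's timezone offset is a multiple of 5 minutes and that cron fires at second 0 of the minute.

```shell
# Seconds until the next minute divisible by 5, i.e. the next */5 cron tick.
# Takes a Unix timestamp as $1; defaults to the current time.
seconds_until_next_run() {
  now=${1:-$(date +%s)}
  period=300  # */5 in the minute field means one run every 300 seconds
  echo $(( period - now % period ))
}

# Usage:
# seconds_until_next_run
```

The result is always between 1 and 300 seconds, which matches the worst case of a full five-minute wait.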

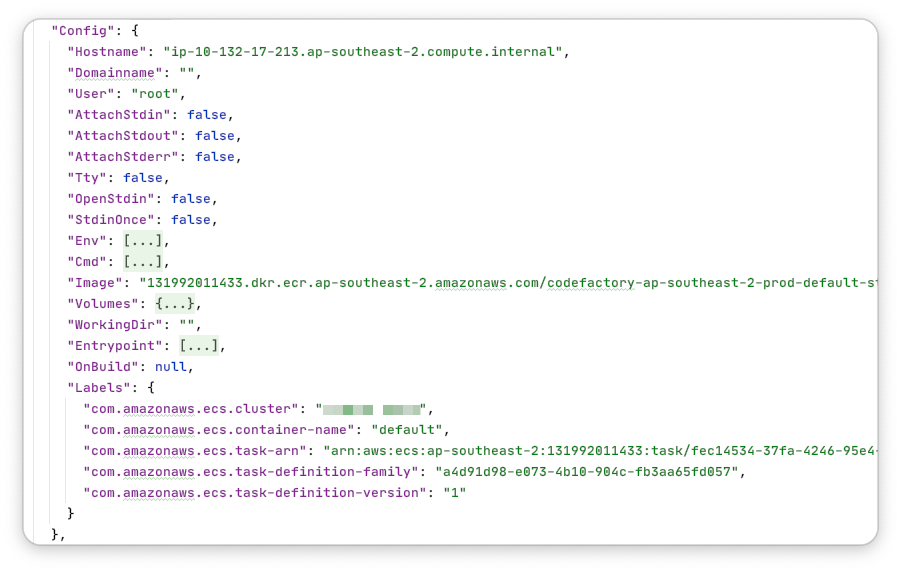

Here are a few observations that I made about the EC2 instance once I got the chance to poke around:

Given that I had free rein over an instance in an AWS-managed account, I thought two things: a) surely the CodeBuild team are aware of this possibility, but also b) I should probably report it just in case. So I reported it on June 5th and got the first response less than five hours later. I got another email on June 11th letting me know that they hadn’t forgotten about me and would aim to get back to me soon. I got the final response on June 18th and it was quite informative! Here it is:

Though Codebuild makes use of containers, the containers do not constitute a security boundary of the Codebuild service. As part of Codebuild's design, instances responsible for running customer jobs are isolated from those belonging to other customers. Security Groups are further used to isolate instances at the network level. The credentials you described are scoped appropriately to the instance where they were obtained and do not grant additional access beyond that instance.

To be honest, I’m pretty happy with that response. I already trust the isolation between customers provided by EC2 and ECS and I don’t have any reason to doubt them with CodeBuild thrown into the mix. It was also nice to get that level of insight shared freely!

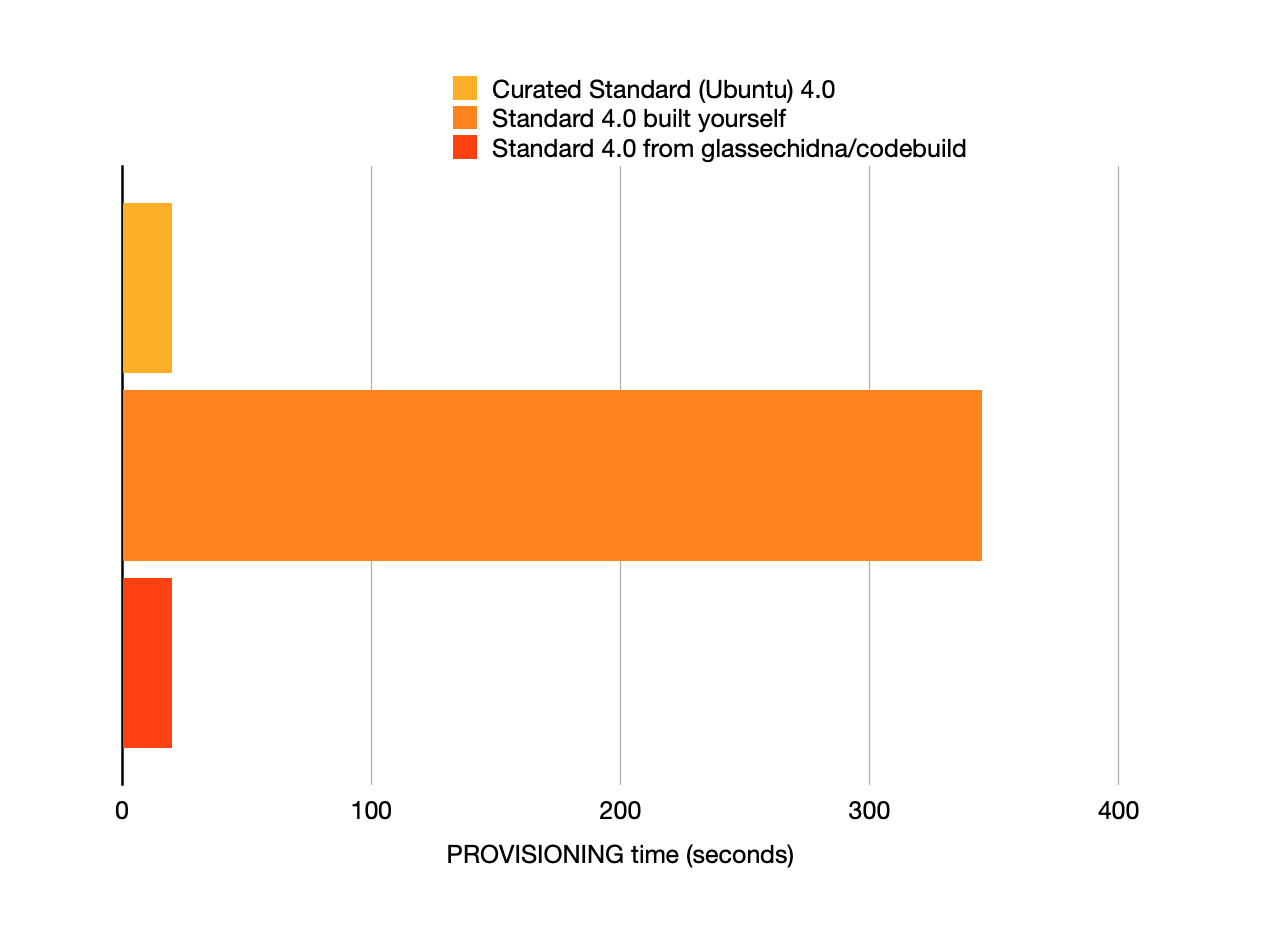

So that’s the story of the compromise that wasn’t. I thought I might have had something, but the clever folks over at AWS considered it as part of their design. And in the end I got what I wanted: a copy of the Docker images that CodeBuild use for their “curated environments”. They’ve provided the Dockerfiles for some time, but not the layers - and those are what I wanted for the reason in the following chart. I likely never would have gone on this escapade had they been available! But here we are and if you want them too, I put them on GitHub.